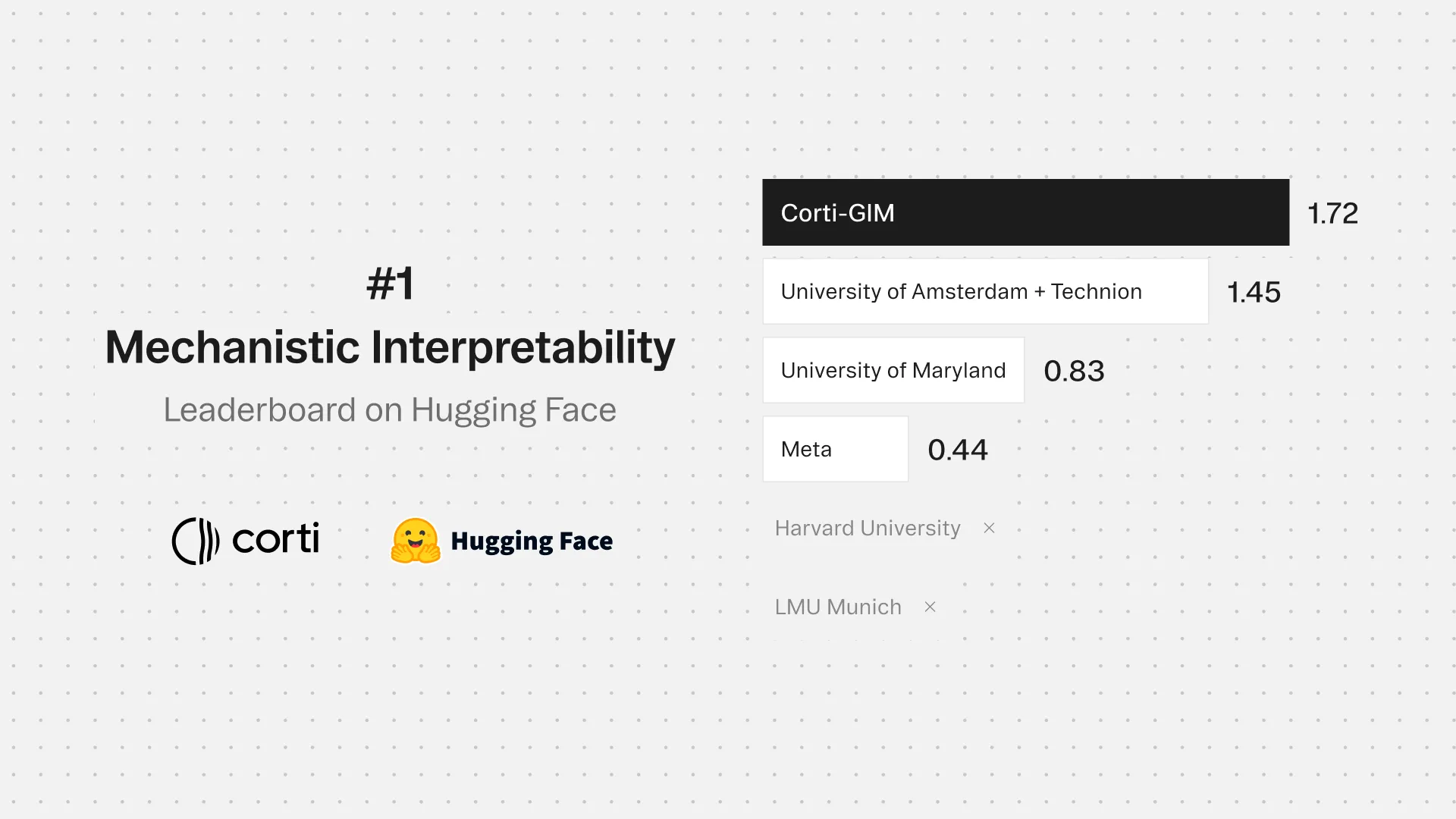

Ranked #1 across the benchmarks that matter in clinical AI

Independent benchmarks across medical coding, speech recognition, clinical reasoning, and agentic AI. Tested head-to-head against the largest AI labs in the world.

Significant outperformance across the full clinical-reasoning stack

Corti Symphony leads the next-best competitor across agent reasoning, medical coding, and speech accuracy.

The hardest problems in healthcare won't be solved by the biggest models. They'll be solved by specialized, clinical-grade models, validated in production.

Clinical tasks have no margin for error. A model that scores well on general benchmarks performs very differently when the task is ICD-10 coding, clinical speech recognition, or multi-step reasoning over a patient record. The gap between claimed and measured performance is where most healthcare AI falls apart.

Corti's benchmarks are run on real clinical tasks, against real inputs, head-to-head with the largest AI labs in the world. The results are published so you can verify them yourself.

Outperforming every major LLM on OpenAI's clinical benchmark

#1 on HealthBench Professional

The safest model under the most difficult conditions

Leading automated medical coding benchmarks

Accuracy Comparison

The most accurate medical speech-to-text API

Word Error Rate (WER)

The best fact extraction tool for ambient AI in healthcare

FactsR™ Groundedness

FactsR™ Conciseness

FactsR™ Completeness

Production-grade building blocks built for every layer of the clinical stack.


Frequently asked questions

Should I interpret the benchmark scores as real-world accuracy rates?

No. The dataset deliberately overrepresents difficult and adversarial examples by roughly 3.5x. A 60% score here can coexist with strong performance in typical clinical use. The benchmark is a stress test, not an average-case measurement.
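To see how a stress-test score and a typical-use score can differ, here is a minimal sketch of the arithmetic. Only the 3.5x oversampling factor comes from the answer above. Everything else is a hypothetical assumption chosen for illustration: the 10% real-world share of difficult cases, the per-group scores of 0.21 and 0.81, the reading of "3.5x" as a multiplier on the share of difficult cases, and the simplification of treating the benchmark score as a weighted average over two groups. None of these are Corti's published figures.

```python
def mixture_score(hard_share: float, hard_score: float, typical_score: float) -> float:
    """Weighted average score over a mix of hard and typical cases."""
    if not 0.0 <= hard_share <= 1.0:
        raise ValueError("hard_share must be between 0 and 1")
    return hard_share * hard_score + (1.0 - hard_share) * typical_score


OVERSAMPLING = 3.5        # from the text: hard cases overrepresented ~3.5x

# Hypothetical values, for illustration only (not published results).
real_hard_share = 0.10    # assumed share of hard cases in typical clinical use
hard_score = 0.21         # assumed score on hard/adversarial cases
typical_score = 0.81      # assumed score on typical cases

# Assumed interpretation: the benchmark's hard share is 3.5x the real-world share.
benchmark_hard_share = OVERSAMPLING * real_hard_share

benchmark = mixture_score(benchmark_hard_share, hard_score, typical_score)
typical_use = mixture_score(real_hard_share, hard_score, typical_score)

print(f"benchmark score:   {benchmark:.2f}")    # 0.60
print(f"typical-use score: {typical_use:.2f}")  # 0.75
```

Under these assumed numbers, the same model scores 0.60 on the oversampled benchmark and 0.75 on a typical case mix. The point is the direction of the effect, not the specific values.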

Regarding safety, what does the red-teaming score actually mean?

About a third of the benchmark consists of physicians deliberately trying to break models. Strategies include false premises, role-play framing, and presenting questionable diagnoses as fact. Symphony scores 59.0 on this subset against GPT-5.4's 30.3. That gap reflects robustness under adversarial clinical pressure, not just routine performance.

What kinds of tasks does the HealthBench Professional benchmark actually test?

Three categories reflecting real clinical workflows: care consult (differential diagnosis, treatment reasoning), writing and documentation (note generation, coding, patient messaging), and medical research (synthesizing and finding clinical evidence).

Is Symphony HIPAA compliant?

Yes. Symphony is built for healthcare environments, including support for sovereign cloud deployments for organisations with strict data residency requirements.

How accurate is the speech recognition in practice?

The Word Error Rate (WER) is 2% on clinical speech, across 150,000+ medical terms and 14 languages. In practical terms, that's a third to a quarter as many errors as the next-best alternatives.
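For readers unfamiliar with the metric, WER is conventionally the number of word-level substitutions, deletions, and insertions needed to turn the transcript into the reference, divided by the number of words in the reference. The sketch below shows that standard definition using a word-level edit distance. It is not Corti's evaluation code: the function name, the lowercase-and-split normalization, and the sample sentences are assumptions made for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in reference."""
    # Assumed normalization: lowercase and split on whitespace.
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one substituted word in an 8-word reference.
reference = "patient denies chest pain or shortness of breath"
hypothesis = "patient denies chest pain and shortness of breath"
print(word_error_rate(reference, hypothesis))  # 0.125
```

By this definition, a 2% WER corresponds to roughly two word errors per 100 reference words.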

What makes FactsR different from standard note summarization?

It runs in real time during the consultation rather than after. By the time the visit ends, structured facts are already extracted, validated, and timestamped.